False Sharing, by Stephen Toub

Unless you've been living under a rock, you've likely heard about the "manycore shift." Processor manufacturers such as Intel and AMD are increasing the power of their hardware by scaling out the number of cores on a processor rather than by attempting to continue to provide exponential increases in clock speed. This shift demands that software developers start to write all of their applications with concurrency in mind in order to benefit from these significant increases in computing power. To address this issue, a multitude of concurrency libraries and languages are beginning to emerge, including Microsoft's Parallel Extensions.

"Reducing false sharing on shared memory multiprocessors through compile time data transformations". Sedms'93: USENIX Systems on USENIX Experiences with Distributed and Multiprocessor Systems. "False sharing and its effect on shared memory performance". Computer organization and design: the hardware/software interface. However, these systems incur some execution overhead. There are also systems that both detect and repair false sharing in executing programs. There are tools for detecting false sharing.

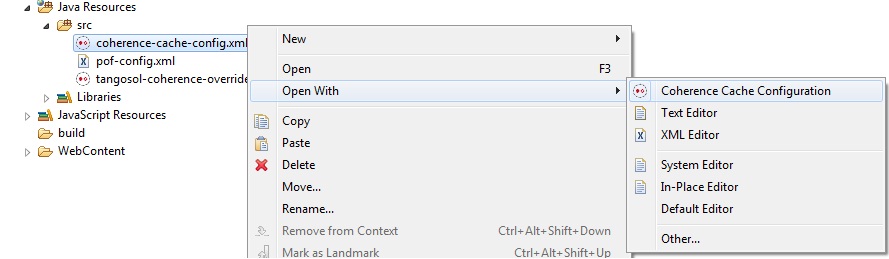

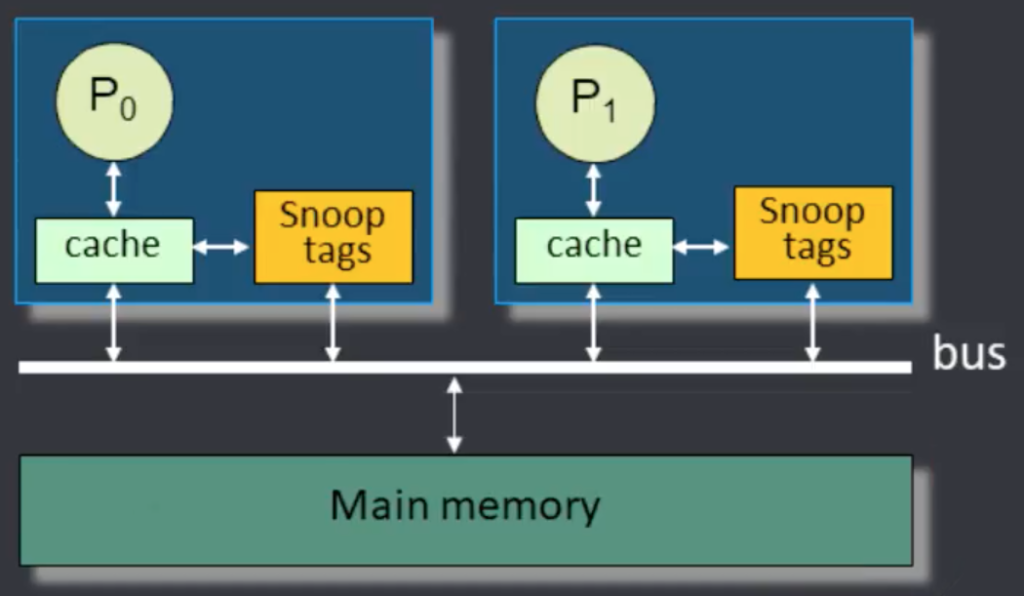

In some cases, eliminating false sharing can yield order-of-magnitude performance improvements. False sharing is an inherent artifact of automatically synchronized cache protocols and can also exist in environments such as distributed file systems or databases, but its current prevalence is limited to RAM caches.

The following code shows the effect of false sharing. It runs with an increasing number of threads, from one thread up to the number of hardware threads in the system. Each thread atomically increments one byte of a cache line that, as a whole, is shared among all threads. The higher the level of contention between threads, the longer each increment takes.

```cpp
#include <iostream>
#include <thread>
#include <new>
#include <atomic>
#include <chrono>
#include <latch>
#include <vector>

using namespace std;
using namespace chrono;

#if defined(__cpp_lib_hardware_interference_size)
// cache-line size as reported by the standard library
constexpr size_t CL_SIZE = hardware_constructive_interference_size;
#else
// most common cache-line size otherwise
constexpr size_t CL_SIZE = 64;
#endif

int main()
{
    // ...
}
```

On a Zen1 system with eight cores and sixteen threads, a single increment operation on the shared cache line can take up to a quarter of a microsecond.

There are ways of mitigating the effects of false sharing. For instance, false sharing in CPU caches can be prevented by reordering variables or by adding padding (unused bytes) between variables. However, some of these program changes may increase the size of the objects, leading to higher memory use. Compile-time data transformations can also mitigate false sharing. However, some of these transformations may not always be allowed. For instance, the C++23 draft of the C++ programming language standard mandates that data members be laid out so that later members have higher addresses.
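The padding mitigation mentioned above can be illustrated with a small sketch. It is not the listing from this article: the names `Packed`, `Padded`, `time_increments`, and `kLineSize` are hypothetical, and the 64-byte line size is an assumption (where the implementation provides it, C++17's `std::hardware_destructive_interference_size` could be used instead). Two threads each increment their own counter, once with the counters adjacent in memory and once with each counter aligned to its own line.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <thread>

// Assumed cache-line size for this sketch (common on x86-64, not universal).
constexpr std::size_t kLineSize = 64;

// Both counters are adjacent and will usually end up in the same cache line.
struct Packed {
    std::atomic<long> a{0};
    std::atomic<long> b{0};
};

// alignas places each counter at the start of its own line,
// at the cost of a larger object (padding bytes are unused).
struct Padded {
    alignas(kLineSize) std::atomic<long> a{0};
    alignas(kLineSize) std::atomic<long> b{0};
};

// Starts two threads; each increments only "its" counter `iterations` times.
// Returns the elapsed wall-clock time in milliseconds.
template <typename Pair>
double time_increments(Pair& pair, long iterations) {
    assert(iterations >= 0);
    auto work = [iterations](std::atomic<long>& counter) {
        for (long i = 0; i < iterations; ++i)
            counter.fetch_add(1, std::memory_order_relaxed);
    };
    const auto start = std::chrono::steady_clock::now();
    std::thread t1([&pair, &work] { work(pair.a); });
    std::thread t2([&pair, &work] { work(pair.b); });
    t1.join();
    t2.join();
    const std::chrono::duration<double, std::milli> elapsed =
        std::chrono::steady_clock::now() - start;
    return elapsed.count();
}
```

Calling `time_increments` on a `Packed` and on a `Padded` object with a large iteration count (say, ten million) and printing both results gives a rough comparison. On many multicore machines the padded variant is expected to finish faster, but the size of the gap depends on the hardware, the compiler, and how the threads are scheduled, so no particular figure should be assumed.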
In computer science, false sharing is a performance-degrading usage pattern that can arise in systems with distributed, coherent caches at the size of the smallest resource block managed by the caching mechanism. When a system participant attempts to periodically access data that is not being altered by another party, but that data shares a cache block with data that is being altered, the caching protocol may force the first participant to reload the whole cache block despite a lack of logical necessity. The caching system is unaware of activity within this block and forces the first participant to bear the caching system overhead required by true shared access of a resource.

By far the most common usage of this term is in modern multiprocessor CPU caches, where memory is cached in lines of some small power-of-two word size (e.g., 64 aligned, contiguous bytes). If two processors operate on independent data in the same memory address region storable in a single line, the cache coherency mechanisms in the system may force the whole line across the bus or interconnect with every data write, forcing memory stalls in addition to wasting system bandwidth.
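To make the "same line" condition concrete, here is a minimal sketch of the address arithmetic, assuming 64-byte lines as in the example above. The helper names (`line_index`, `same_line`, `Counters`, `counters_share_line`) are hypothetical and chosen only for illustration. Two addresses belong to the same line when integer division by the line size gives the same result.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumed line size for this sketch; 64 bytes is common but not universal.
constexpr std::size_t kLineSize = 64;

// Index of the cache line that contains a given address.
constexpr std::uintptr_t line_index(std::uintptr_t address) {
    return address / kLineSize;
}

// True when two addresses fall into the same cache line.
constexpr bool same_line(std::uintptr_t a, std::uintptr_t b) {
    return line_index(a) == line_index(b);
}

// Two logically independent counters that are adjacent in memory.
// alignas makes the struct start exactly on a line boundary.
struct alignas(kLineSize) Counters {
    int a;  // imagined to be written by one thread
    int b;  // imagined to be written by another thread
};

// Reports whether both members of `c` occupy one cache line.
inline bool counters_share_line(const Counters& c) {
    // The struct itself must begin on a line boundary because of alignas.
    assert(reinterpret_cast<std::uintptr_t>(&c) % kLineSize == 0);
    return same_line(reinterpret_cast<std::uintptr_t>(&c.a),
                     reinterpret_cast<std::uintptr_t>(&c.b));
}
```

Under these assumptions `counters_share_line` returns true for any `Counters` object: `a` sits at offset 0 and `b` a few bytes later, far short of the 64-byte boundary. That is exactly the layout in which writes to `a` by one processor and to `b` by another would contend for the same line even though the data is independent.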
